TL;DR

This paper presents a generative AI system with built-in Explainable AI (XAI), paving the way for its use in real-world applications.

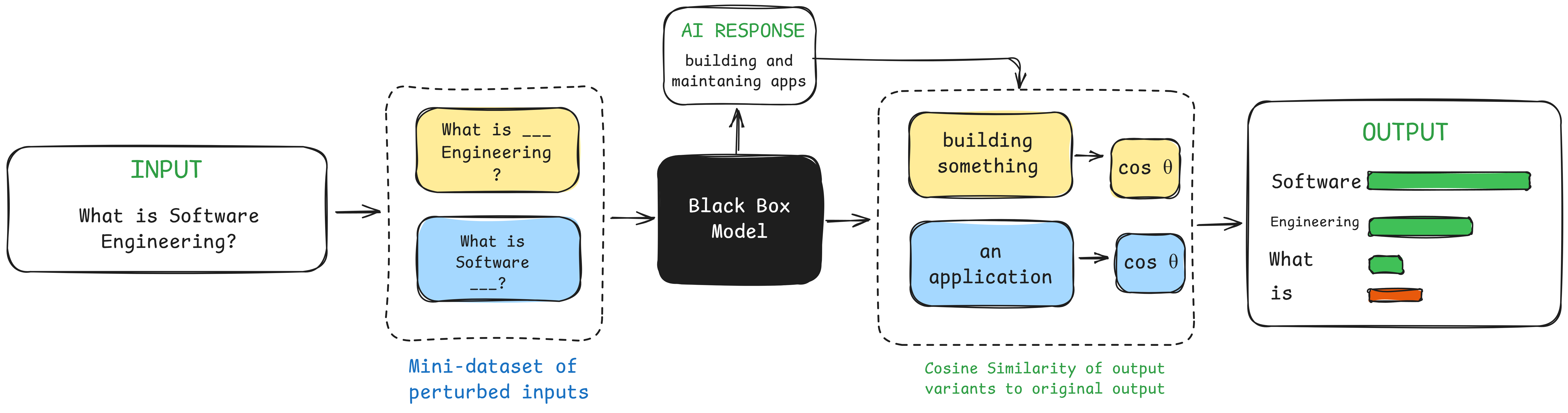

As artificial intelligence becomes increasingly integrated into high-stakes decision-making across healthcare, finance, and education, the opacity of black-box models presents critical challenges for user trust and adoption. This study addresses that challenge by introducing XeeAI, a web-based chatbot system that integrates Local Interpretable Model-Agnostic Explanations (LIME) with Gemini 2.5 Pro to provide real-time, token-level visual explanations of AI-generated responses. Employing a quasi-experimental mixed-methods approach grounded in Human-Computer Interaction principles and Li et al.'s three-dimensional trust framework (Trustor, Trustee, Interactive Context), the research evaluated 100 participants (undergraduate students, faculty, and AI practitioners) through A/B testing with stratified random sampling. Group A (n=45) interacted with LIME-enhanced visualizations while Group B (n=45) used the system without explanations, with additional expert evaluations from faculty and AI engineers. Results showed statistically significant improvements in user trust across all dimensions (p<0.001), with Z-statistics exceeding 9.6 for all trust categories. The system achieved an Area Under Perturbation Curve score of 71.6%, confirming high local fidelity of explanations, while usability assessments yielded excellent ratings (SUS score: 87.64; ISO 25010 metrics averaging 3.9/5.0). Qualitative thematic analysis revealed that transparency and explainability were the most valued features, with users reporting enhanced comprehension and confidence despite minor performance trade-offs from real-time explanation generation. This work demonstrates that thoughtfully designed XAI visualizations can bridge the gap between complex AI systems and diverse user populations, providing empirical evidence for developing more transparent, trustworthy, and user-centered AI applications for responsible deployment in real-world contexts.
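To make the idea of token-level LIME explanations concrete, here is a minimal, dependency-free sketch of the general technique: randomly mask tokens, score each masked input with a black-box function, and fit a locally weighted linear surrogate whose coefficients serve as token importances. It is not XeeAI's implementation. The names `toy_black_box` and `lime_style_token_weights`, the kernel width, the distance measure (fraction of tokens removed), and the small ridge term are all assumptions chosen for illustration, and the toy scorer stands in for a real model such as Gemini.

```python
import math
import random


def toy_black_box(tokens):
    """Hypothetical stand-in for a model score; not a real LLM call."""
    weights = {"excellent": 2.0, "good": 1.0, "bad": -1.5}
    return sum(weights.get(t, 0.0) for t in tokens)


def solve_linear_system(a, b):
    """Solve a @ x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for i in range(n - 1, -1, -1):
        s = sum(m[i][j] * x[j] for j in range(i + 1, n))
        x[i] = (m[i][n] - s) / m[i][i]
    return x


def lime_style_token_weights(tokens, predict, num_samples=500,
                             kernel_width=0.75, ridge=1e-3, seed=0):
    """Return (token, weight) pairs from a locally weighted linear surrogate."""
    rng = random.Random(seed)
    d = len(tokens)
    rows, targets, sample_weights = [], [], []
    for i in range(num_samples):
        # The first sample is the unperturbed input; the rest drop random tokens.
        mask = [1] * d if i == 0 else [rng.randint(0, 1) for _ in range(d)]
        kept = [t for t, keep in zip(tokens, mask) if keep]
        distance = 1.0 - sum(mask) / d  # assumed distance: fraction removed
        sample_weights.append(math.exp(-(distance ** 2) / kernel_width ** 2))
        rows.append([1.0] + [float(v) for v in mask])  # leading 1.0 = intercept
        targets.append(predict(kept))

    # Weighted ridge regression via normal equations:
    # (X^T W X + ridge * I) beta = X^T W y, with the intercept left unpenalized.
    p = d + 1
    xtwx = [[0.0] * p for _ in range(p)]
    xtwy = [0.0] * p
    for row, y, w in zip(rows, targets, sample_weights):
        for j in range(p):
            xtwy[j] += w * row[j] * y
            for k in range(p):
                xtwx[j][k] += w * row[j] * row[k]
    for j in range(1, p):
        xtwx[j][j] += ridge
    beta = solve_linear_system(xtwx, xtwy)
    return list(zip(tokens, beta[1:]))


if __name__ == "__main__":
    sentence = "the service was excellent but bad".split()
    for token, weight in lime_style_token_weights(sentence, toy_black_box):
        print(f"{token:>10s}  {weight:+.3f}")
```

Because the toy scorer is itself additive, the surrogate recovers its per-token weights almost exactly; with a real language model the fit is only a local approximation, which is why a fidelity measure such as the perturbation-curve score reported above is needed.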

